Imagine waking up one day and finding out that a computer has quietly decided you are “suspicious.”

No arrest. No notice. No phone call. Just a silent mark against your name inside a government database — and you have absolutely no idea it has happened.

This is not a scene from a sci-fi movie. This is the reality that Operation Gandiv AI is quietly building across India right now.

In this article, we explain exactly what Operation Gandiv AI is, how it works, and why every single Indian — whether you live in Mumbai or a small village in Bihar — needs to know about it.


1. What Is Operation Gandiv AI? Let’s Start From the Beginning

Think of it this way.

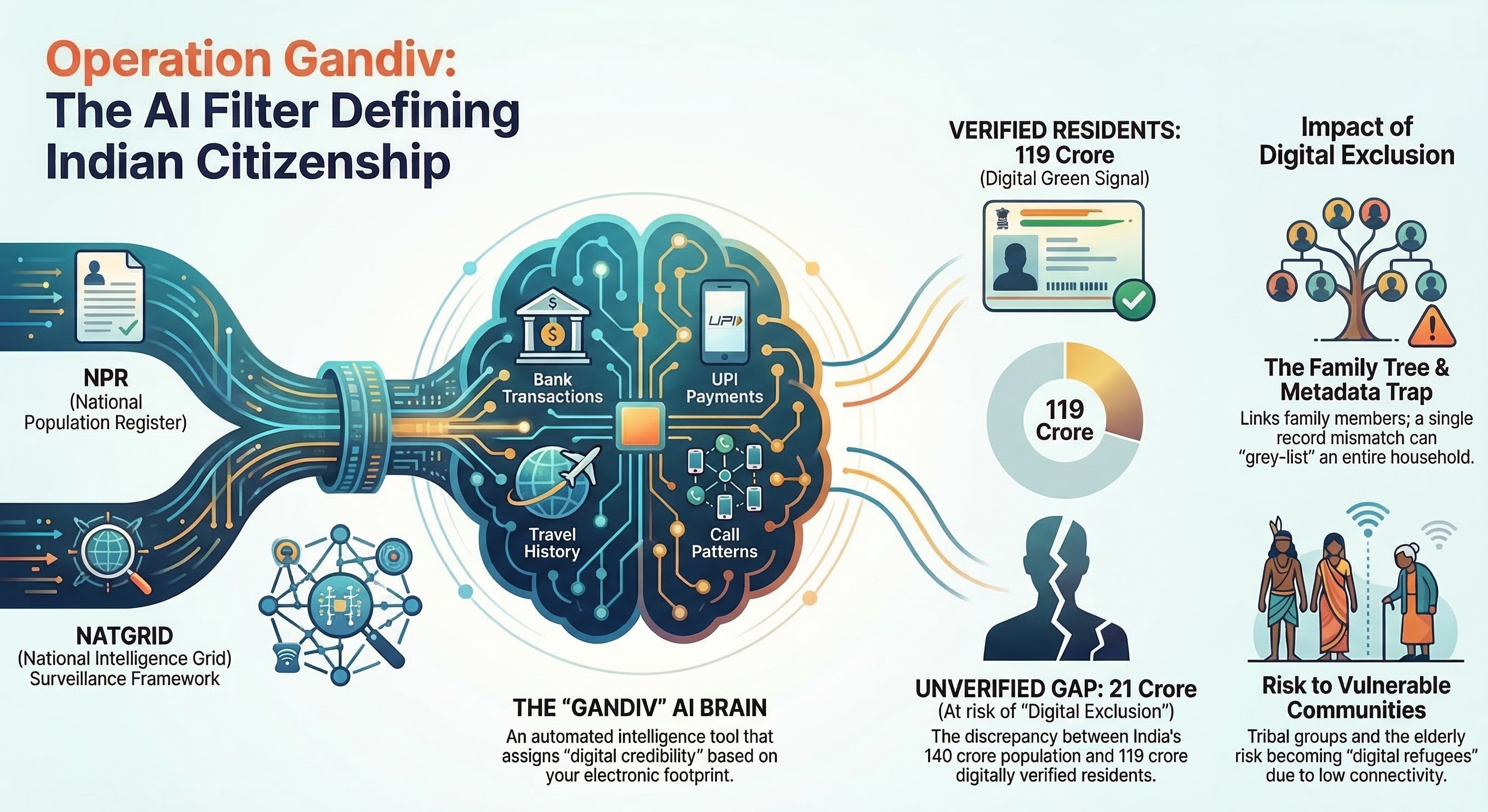

Your whole digital life — your bank transactions, your phone calls, your travel history, your UPI payments — is like scattered puzzle pieces sitting in different government offices. One piece is with your bank. Another is with the telecom company. Another is with the railway department.

NATGRID — the National Intelligence Grid — collects all those pieces and puts them together in one place.

And Operation Gandiv AI is the brain that looks at that complete picture and judges you.

Its job is not just to track a few criminals or terrorists. Its job is much bigger. It aims to verify every single one of India’s 140 crore people by connecting the National Population Register (NPR) with NATGRID data.

📌 Simply put: For the first time ever in Indian history, your citizenship data and national security data are being looked at together — by an artificial intelligence.

The name “Gandiv” comes from Arjuna’s powerful bow in the Mahabharata. And just like that bow, this system is designed to be fast, precise, and impossible to escape.

2. How Does Operation Gandiv AI Actually Judge You?

Here is the part that will really make you think.

Operation Gandiv AI does not care about your name, your religion, or your caste. It does not ask who you are. It only asks one question:

“How clean is your digital life?”

The AI scans your data every single day. It looks at your UPI transactions, your bank activity, your phone call patterns, and your travel history. It is searching for anything that looks unusual.

What Gets You Flagged by Operation Gandiv AI?

→ You visited a location that the system considers suspicious

→ You received money from an unknown account with no clear reason

→ Your phone usage pattern suddenly changed in a way the AI does not expect

→ There are gaps in your digital activity that the system cannot explain

Now here is the scary part. Nothing happens immediately. There is no police knock on your door. There is no official letter.

Instead, the system quietly gives you a digital credibility score of zero and marks you as “unreliable” deep inside a government database. You carry on with your life completely unaware, but the state now sees you differently.

⚠️ Think about this: You could be flagged as suspicious right now and have absolutely no way of knowing it.

3. The 21-Crore Problem Nobody Is Talking About

Here is a number that should shock you.

The Operation Gandiv AI system has already divided India’s 140 crore people into two groups:

✅ 119 Crore People — Safe and Verified

These are people whose digital data is clean, consistent, and easy for the system to track. Their Aadhaar is updated. Their SIM is in their own name. Their UPI transactions make sense to the AI.

❌ 21 Crore People — Flagged as “Non-Verifiable”

These are people the system cannot properly track or verify. Their data is missing, inconsistent, or simply not there.

Now the government says this 21-crore group is where illegal migrants and infiltrators are hiding. That may be partly true.

But think about who else is inside that 21-crore group.

The farmer in Rajasthan who has never used a smartphone. The 70-year-old grandmother in Odisha whose Aadhaar was made 10 years ago and never updated. The daily wage worker in Delhi whose SIM card is registered in his landlord’s name.

These are not infiltrators. These are Indians. And the Operation Gandiv AI system treats them exactly the same way.

4. The AI Is Building a Digital Family Tree of Every Indian

This is where things get even more interesting — and more worrying.

Operation Gandiv AI does not just look at you individually. It builds what you can think of as a digital family tree for your entire household.

How the Metadata Test Works

The AI runs an automatic check on small details — called metadata — that most people never even think about:

→ Is your SIM card registered in your own name or someone else’s?

→ When did you last update your Aadhaar? If it has been years, that is a red flag.

→ Do your family members’ records all connect properly to each other?

The Grey List Problem

Here is the part that affects entire families. If your father’s records are missing, or your wife’s Aadhaar does not link correctly, the whole family can end up on a “grey list.”

Not just the person with the problem. Everyone.

And this entire process happens automatically. No human being reviews it. No government officer calls you. A machine makes the decision and moves on to the next family.
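To make the grey-list logic described above concrete, here is a minimal Python sketch. It is purely illustrative: the record fields (`sim_in_own_name`, `aadhaar_last_updated_year`, `records_linked`), the five-year staleness threshold, and the function names are all assumptions made for this example, not details of any real system.

```python
from dataclasses import dataclass


@dataclass
class Person:
    name: str
    sim_in_own_name: bool           # hypothetical field
    aadhaar_last_updated_year: int  # hypothetical field
    records_linked: bool            # hypothetical: do family records connect?


def metadata_flags(person: Person, current_year: int, max_age_years: int = 5) -> list[str]:
    """Return the reasons (if any) this person fails the metadata checks."""
    flags = []
    if not person.sim_in_own_name:
        flags.append("sim_not_in_own_name")
    if current_year - person.aadhaar_last_updated_year > max_age_years:
        flags.append("aadhaar_stale")
    if not person.records_linked:
        flags.append("records_not_linked")
    return flags


def household_is_grey_listed(members: list[Person], current_year: int) -> bool:
    """One failing member is enough to grey-list the whole household."""
    return any(metadata_flags(m, current_year) for m in members)


family = [
    Person("father", True, 2014, True),  # Aadhaar not updated for years
    Person("mother", True, 2023, True),
    Person("son", True, 2024, True),
]
print(household_is_grey_listed(family, current_year=2025))  # True
```

Only the father’s record fails a check here, yet the whole household comes back as grey-listed. That is the family-wide effect in a single line of logic, with no step where a human reviews the result.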

📌 This is called “algorithmic governance” — when a computer makes decisions about your life that used to be made by human beings.

5. What About People Who Simply Do Not Use the Internet?

This is the question that keeps civil rights experts up at night.

Operation Gandiv AI is built on one big assumption — that everyone in India leaves a digital footprint. But the truth is, millions of Indians do not.

Who Gets Trapped by the Invisibility Problem?

→ Poor families in rural areas with no internet or smartphone access

→ Elderly citizens who have never used UPI or online banking

→ Tribal communities living in forests and remote areas

→ Nomadic groups who move frequently and have no fixed address

→ Daily wage workers whose SIM cards are often registered in someone else’s name

To Operation Gandiv AI, all of these people look the same as someone who is deliberately hiding from the system.

The AI cannot tell the difference between a 65-year-old tribal woman in Chhattisgarh who has never owned a smartphone and a foreign infiltrator who is intentionally avoiding a digital trail.

Both of them show up as invisible on the system. Both of them get the same suspicious flag.
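A tiny Python sketch shows why this happens. It assumes, hypothetically, that the system scores people only on the digital signals it can observe. The signal names and the scoring rule are invented for illustration and do not describe any real system.

```python
from typing import Optional


def credibility_score(upi_txns: Optional[int],
                      has_smartphone: Optional[bool],
                      travel_records: Optional[int]) -> int:
    """Hypothetical score: one point per digital signal the system can observe."""
    signals = [upi_txns, has_smartphone, travel_records]
    # Missing data (None) and zero activity both count as "nothing observed".
    return sum(1 for s in signals if s)


# A citizen who has never owned a smartphone: no data exists at all.
tribal_elder = credibility_score(upi_txns=None, has_smartphone=None, travel_records=None)

# Someone deliberately avoiding a digital trail: activity is kept at zero on purpose.
deliberate_evader = credibility_score(upi_txns=0, has_smartphone=False, travel_records=0)

print(tribal_elder, deliberate_evader)    # 0 0
print(tribal_elder == deliberate_evader)  # True
```

Under this kind of rule, "no data because the person never had access" and "no data because the person is hiding" collapse into the same score. Telling them apart would need information from outside the digital trail, such as a human field check.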

These people risk becoming what experts are calling “digital refugees” — Indians who have lived here their whole lives but are now invisible to their own government’s computers.

6. Your Citizenship Is Now Like a Subscription Service

Here is a way to think about what Operation Gandiv AI is really changing.

You know how a Netflix subscription works? As long as you keep paying, you keep access. The moment you stop, the service cuts you off.

Operation Gandiv AI is turning Indian citizenship into something similar.

Your citizenship is no longer a permanent right you were born with and will carry till you die. Under this new framework, it is something you must prove — every single day — through your digital activity.

What Happens When You Get Cut Off?

If the AI marks you as unverified, something very quiet but very serious happens. You are still physically inside India. But in the digital world — in the world of government databases, welfare schemes, and official records — you are now on the outside.

Your digital footprint — not your birth certificate, not your voter ID — now decides whether the state recognizes you as a citizen.

📌 Old India: Show your document. Prove who you are.

📌 New India: The AI watches your life 24 hours a day and decides for itself.

7. The Most Dangerous Question: Can an AI Delete Your Vote?

This is the part of the Operation Gandiv AI story that has the biggest consequences for Indian democracy.

Every few years, the Election Commission of India cleans up voter lists through a process called the Special Intensive Revision (SIR). The goal is to remove fake voters and outdated entries. Completely normal and necessary.

But here is the danger.

How Operation Gandiv AI Could Remove Real Voters

If the AI has flagged someone from the 21-crore non-verifiable group as suspicious — and that flag is then used to remove them from the voter list — then a computer program has just taken away an Indian citizen’s right to vote.

No judge ordered it. No politician signed a paper. A machine did it automatically.

And who is most at risk? The exact same people we already talked about — poor citizens, rural voters, tribal communities, the elderly — the people who are most likely to be in that 21-crore non-verifiable group because they simply do not have a strong digital presence.

🗳️ The real danger: Operation Gandiv AI could quietly turn a national security tool into a machine that removes millions of genuine Indian voters from electoral rolls — without a single human making that decision.

So What Does All of This Mean for You?

Let us bring this back to your daily life.

If your Aadhaar is updated, your SIM is in your own name, your UPI transactions are regular and make sense, and your family’s records all connect properly — you are probably in the 119-crore “safe” group. The AI sees you as a clean, verifiable Indian citizen.

But if any of those things are missing or inconsistent — even for reasons that have nothing to do with being illegal — you could already be in that 21-crore flagged group without knowing it.

Here is what you can do right now:

→ Update your Aadhaar if it has been more than 2-3 years

→ Make sure your SIM card is registered in your own name

→ Link your Aadhaar with your bank account if you have not already

→ Check that your family members’ documents are all consistent with each other

Conclusion: Are We Ready for an AI That Decides Who Belongs?

Operation Gandiv AI is not coming. It is already here.

India is quietly becoming what political thinkers call a “Digital Leviathan” — a state that knows everything about its citizens, judges them through algorithms, and decides their status based on the quality of their digital life.

The government’s goal is understandable. Identifying illegal migrants and strengthening national security is a legitimate aim. But when the tool you build is powerful enough to mark 21 crore people as suspicious — and when those 21 crore people include some of India’s most vulnerable citizens — the questions it raises are ones that affect every single one of us.

In the India that Operation Gandiv AI is building, your freedom may one day depend on a green signal from a machine.

The borders of this country are quietly moving — from the fences on our physical boundaries to the space between your bank account and your mobile phone.

And the most important question every Indian needs to ask right now is this:

In a country that belongs to its people — should a computer get to decide who those people are?
